At CES 2026 in Las Vegas, NVIDIA CEO Jensen Huang delivered a groundbreaking keynote, declaring the arrival of the “ChatGPT moment for physical AI.” The spotlight shone brightly on the NVIDIA Rubin Platform, the company’s next-generation AI supercomputer architecture succeeding the record-breaking Blackwell series. Named after pioneering astronomer Vera Rubin, this platform represents NVIDIA’s most ambitious push yet into extreme co-design, integrating six revolutionary chips to power the future of agentic AI, robotics, autonomous vehicles, and trillion-parameter models.

With the Rubin platform now in full production and systems slated for deployment in the second half of 2026, NVIDIA is positioning itself to dominate the exploding demand for AI inference and training. The announcement sent ripples through the tech world, drawing attention from gamers awaiting hints of consumer GPUs (none came), developers hungry for open models, and AI enthusiasts eager for the next performance frontier.

Jensen Huang’s CES 2026 Keynote: A Vision for Physical AI Dominance

Huang’s nearly two-hour presentation at the Fontainebleau Las Vegas was a masterclass in forward-thinking narration. He began by honoring Vera Rubin, the astronomer who provided evidence for dark matter, drawing parallels to how NVIDIA’s platform will uncover new “dark” frontiers in AI scalability.

“The era of physical AI is here,” Huang proclaimed, emphasizing how AI is transitioning from digital chatbots to machines that perceive, reason, and act in the real world. He showcased adorable robotic companions on stage—autonomous droids powered by NVIDIA’s Cosmos AI—waddling and interacting in real-time, illustrating the seamless blend of simulation and reality.

Key themes included:

- Scaling AI into every domain and device.

- The explosive growth of agentic AI and mixture-of-experts (MoE) models.

- Open models as the foundation for global innovation.

- Partnerships driving real-world deployment, from cloud giants to automotive leaders.

Huang’s charisma turned complex tech into an exciting story: AI isn’t just computing anymore—it’s thinking, moving, and transforming industries.

Rubin vs. Blackwell: A 5x Performance Leap and Dramatic Cost Reductions

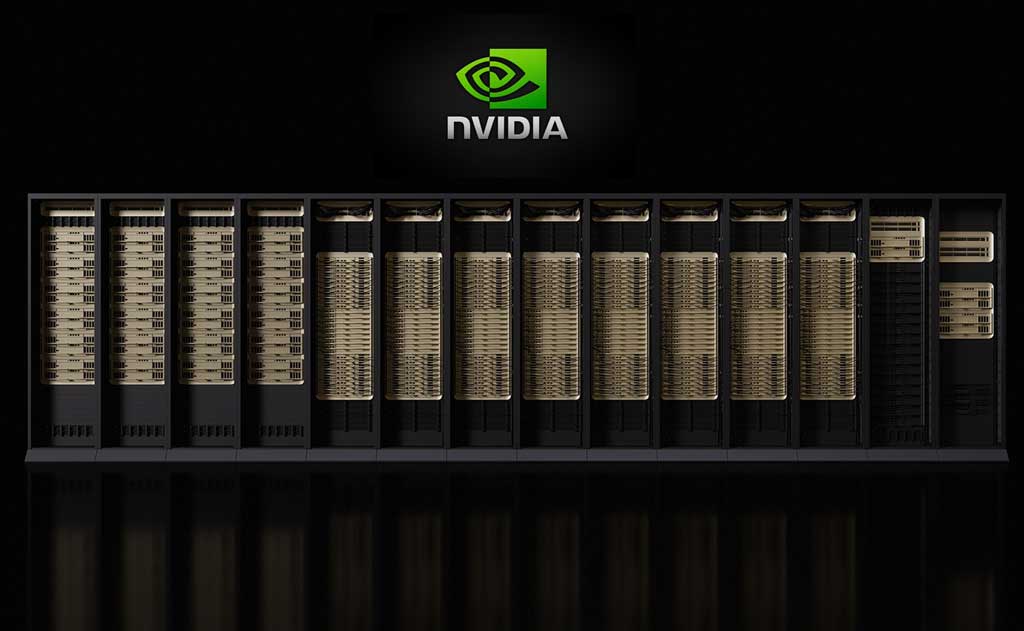

The star of the show was the Rubin Platform, NVIDIA’s first “extreme co-designed” six-chip AI supercomputer. This isn’t just a new GPU—it’s a holistic system built from the data center outward to eliminate bottlenecks.

At its core:

- Vera Rubin Superchip: Combines one Vera CPU and two Rubin GPUs in a single processor.

- Rubin GPU: Dual reticle-sized dies on TSMC’s 3nm process, packing 336 billion transistors (1.6x Blackwell).

- Supporting chips: NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet Switch.

Performance highlights compared to Blackwell:

- Up to 5x higher inference performance (50 PFLOPS NVFP4 vs. Blackwell’s 10 PFLOPS).

- 3.5x training performance (35 PFLOPS NVFP4).

- 2.8x more memory bandwidth with next-gen HBM4 (up to 288GB per GPU, 22 TB/s).

- 10x lower cost per token for inference in MoE models.

- 4x fewer GPUs needed to train the same MoE model.
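To see how the quoted multipliers relate to the absolute figures, here is a minimal Python sketch that recomputes them. The Rubin values and multipliers come from the list above. The "implied" Blackwell training and bandwidth numbers are simply backed out from those multipliers and are an assumption of this sketch, not official specifications.

```python
# Figures quoted in the article (per GPU, NVFP4 precision where applicable).
RUBIN_INFERENCE_PFLOPS = 50.0
BLACKWELL_INFERENCE_PFLOPS = 10.0
RUBIN_TRAINING_PFLOPS = 35.0
TRAINING_SPEEDUP = 3.5
RUBIN_HBM4_TBPS = 22.0
BANDWIDTH_SPEEDUP = 2.8


def speedup(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


def implied_baseline(new: float, factor: float) -> float:
    """Back out the baseline implied by a quoted value and its multiplier."""
    return new / factor


inference_speedup = speedup(RUBIN_INFERENCE_PFLOPS, BLACKWELL_INFERENCE_PFLOPS)
# Derived values below are assumptions implied by the article's ratios.
implied_blackwell_training = implied_baseline(RUBIN_TRAINING_PFLOPS, TRAINING_SPEEDUP)
implied_blackwell_bandwidth = implied_baseline(RUBIN_HBM4_TBPS, BANDWIDTH_SPEEDUP)

print(f"Inference speedup: {inference_speedup:.1f}x")
print(f"Implied Blackwell training: {implied_blackwell_training:.1f} PFLOPS")
print(f"Implied Blackwell bandwidth: {implied_blackwell_bandwidth:.2f} TB/s")
```

The inference ratio works out to exactly the 5x headline figure, which suggests the "up to 5x" claim is a peak-throughput comparison rather than a measured workload result.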

The flagship Vera Rubin NVL72 rack packs 72 Rubin GPUs and 36 Vera CPUs, delivering 3.6 EFLOPS inference and massive scale-up bandwidth—described by Huang as providing “more bandwidth than the entire internet.”
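The rack-level numbers follow from the per-GPU figures quoted earlier. A short Python sketch of that arithmetic, assuming every GPU hits its quoted peak and carries the maximum 288GB of HBM4 (the total memory figure is derived here, not quoted by NVIDIA in this article):

```python
# Per-GPU and per-rack figures quoted in the article.
GPUS_PER_RACK = 72
GPUS_PER_SUPERCHIP = 2          # one Vera CPU pairs with two Rubin GPUs
INFERENCE_PFLOPS_PER_GPU = 50   # NVFP4 peak
HBM4_GB_PER_GPU = 288           # "up to" value, so an upper bound
PFLOPS_PER_EFLOPS = 1000
GB_PER_TB = 1000

# One Vera CPU per superchip gives the CPU count for the rack.
vera_cpus = GPUS_PER_RACK // GPUS_PER_SUPERCHIP

# Aggregate peak inference throughput across all GPUs in the rack.
rack_inference_eflops = GPUS_PER_RACK * INFERENCE_PFLOPS_PER_GPU / PFLOPS_PER_EFLOPS

# Derived upper bound on total HBM4 capacity (assumption of this sketch).
rack_hbm4_tb = GPUS_PER_RACK * HBM4_GB_PER_GPU / GB_PER_TB

print(f"Vera CPUs per rack: {vera_cpus}")
print(f"Peak rack inference: {rack_inference_eflops:.1f} EFLOPS")
print(f"HBM4 per rack (upper bound): {rack_hbm4_tb:.3f} TB")
```

Both the 36-CPU count and the 3.6 EFLOPS figure are consistent with 72 GPUs at 50 PFLOPS each, so the rack number is a straight sum of per-GPU peaks.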

Why the leap? NVIDIA credits three things: a third-generation Transformer Engine with adaptive compression, AI-native KV-cache storage (5x tokens per second, 5x efficiency), and rack-scale trusted computing for securing frontier models.

Rubin arrives amid skyrocketing AI demand, slashing costs while boosting efficiency—perfect for trillion-parameter reasoning models and agentic AI that “think” through multi-step tasks.

Alpamayo: Revolutionizing Autonomous Driving with Open Reasoning Models

Huang introduced NVIDIA Alpamayo, an open family of reasoning-based AI models, simulation tools, and datasets for Level 4 autonomous vehicles. This is NVIDIA’s bold entry into “thinking” self-driving tech, solving the “long-tail” problem of rare, complex scenarios.

Key components:

- Alpamayo 1: A 10-billion-parameter Vision-Language-Action (VLA) model with chain-of-thought reasoning—vehicles now “think” step-by-step, explain decisions, and handle edge cases like traffic light outages.

- AlpaSim: Open-source simulation framework for high-fidelity testing.

- Physical AI Datasets: Over 1,700 hours of multi-sensor driving data across geographies.

Alpamayo powers “AI-defined driving,” debuting in the all-new Mercedes-Benz CLA—rated the “safest car in the world” with Euro NCAP five stars. Rollout starts in the U.S. soon, expanding globally.

Impact on autonomous vehicles: Safer, more explainable AVs. Early adopters include Lucid, Jaguar Land Rover, Uber, and more. NVIDIA plans to begin testing its own robotaxi service by 2027.

This open approach positions NVIDIA as the “Android of autonomy,” accelerating industry-wide progress while tying developers to its ecosystem.

Open Models Powering Physical AI in Robotics and Healthcare

NVIDIA doubled down on openness, releasing a vast portfolio of foundation models trained on its supercomputers:

- Cosmos Platform: World foundation models for synthetic data generation, prediction, and reasoning in physical environments.

- Isaac GR00T N1.6: Open VLA model for humanoid robots, enabling whole-body control and advanced manipulation.

- Clara for Healthcare: Models accelerating biomedical AI, from drug discovery to surgical robotics.

Partners like Boston Dynamics, Franka Robotics, LG, and Caterpillar unveiled next-gen robots powered by these models. In healthcare, LEM Surgical and XRlabs use Isaac for autonomous surgical arms and real-time analysis.

Huang demoed personalized AI agents on DGX Spark desktops, embodied in robots—turning open models into responsive physical collaborators.

This ecosystem—spanning Nemotron (agentic AI), Earth-2 (climate), and more—empowers developers to fine-tune and deploy, fostering innovation while ensuring NVIDIA hardware remains indispensable.

Why Rubin and Physical AI Matter: The Broader Impact on Agentic AI and Beyond

Rubin isn’t just hardware. It’s the engine for agentic AI: systems that plan, reason, and execute multi-step tasks autonomously. With inference cost per token up to 10x lower on MoE models, trillion-parameter models become far more practical to serve, unlocking breakthroughs in:

- Robotics: Humanoids in factories, homes, and warehouses.

- Autonomous Vehicles: Safer robotaxis and personal cars.

- Healthcare: Precision surgery and personalized medicine.

- Enterprise: Efficient MoE models routing queries to specialized “experts.”
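For readers unfamiliar with how an MoE model routes a query to "experts," the following is a minimal, generic sketch of top-k gating in plain Python. It is not NVIDIA's implementation: the function names and the gate scores are hypothetical, and real systems compute these scores with a learned gating network over tensors.

```python
import math


def softmax(scores):
    """Convert raw gate scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts for one token.

    Returns (expert_index, weight) pairs, with weights renormalized
    so the selected experts' weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]


# Hypothetical gate scores for one token across four experts.
routes = route_top_k([0.1, 2.0, -1.0, 1.5], k=2)
for expert, weight in routes:
    print(f"expert {expert}: weight {weight:.3f}")
```

Only the selected experts run for a given token, which is why MoE models can grow total parameter count without a proportional rise in compute per token.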

Cloud providers including AWS, Google Cloud, Microsoft, and CoreWeave will be among the first to deploy Rubin, with Microsoft integrating it into its Fairwater AI superfactories.

For gamers and developers: While no RTX news dropped, Rubin’s technology is likely to trickle down over time, improving simulation, ray tracing, and AI upscaling in future GPU generations.

The Future According to NVIDIA: A Multi-Trillion Opportunity

Huang closed with optimism: Physical AI will redefine industries, creating trillions in value. NVIDIA’s annual cadence—Hopper to Blackwell to Rubin—ensures relentless innovation.

As interest in Rubin, Vera Rubin GPUs, Alpamayo, and physical AI surges after CES 2026, one thing is clear: NVIDIA isn’t just riding the AI wave. It’s building the ocean.

Ethan Brooks covers the tech that’s reshaping how we move, work, and think for VFuture Media. He was at CES 2026 in Las Vegas when the world got its first real look at humanoid robots, AI-powered vehicles, and Samsung’s tri-fold phone. He writes about AI, EVs, gadgets, and green tech every week. No hype. No filler.

