Atlanta, GA – May 16, 2026 – In a move that continues to set it apart from Big Tech peers, X (formerly Twitter) has repeatedly open-sourced major portions of its recommendation algorithm — most recently in January 2026 with ongoing updates under the xAI umbrella. While Elon Musk’s platform publishes code on GitHub every four weeks, complete with developer notes, the rest of the social media world guards its algorithms like crown jewels.

The question echoes across forums, regulatory hearings, and creator communities: Why don’t the others do the same?

X’s Transparency Play: Bold Bet or Calculated Theater?

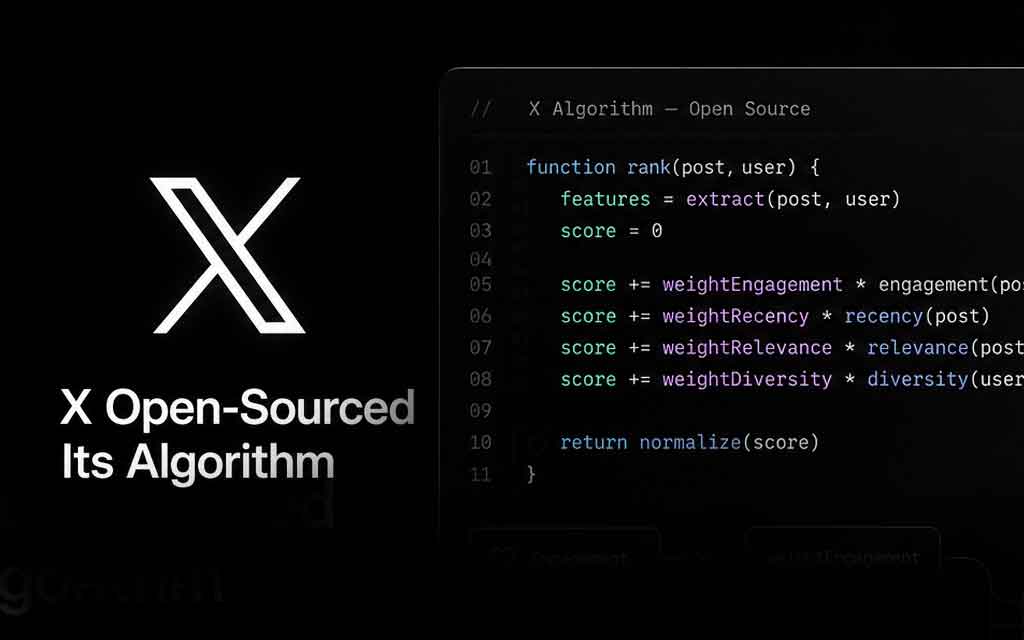

When Elon Musk acquired Twitter in 2022, he promised radical openness. In 2023 the company released parts of its recommendation system. In early 2026, X went further by open-sourcing its updated “For You” feed architecture — powered increasingly by Grok models — under an Apache 2.0 license. The code covers candidate sourcing, ranking signals (engagement, dwell time, author diversity), spam filtering, and ad blending.
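For readers who want a feel for how those four stages fit together, here is a minimal Python sketch. It is an illustration only and is not taken from X's published code: every name, weight, and threshold below (`Post`, `base_score`, the 0.6/0.4 split, the halving penalty for repeat authors, one ad every three posts) is a hypothetical assumption.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    author: str
    engagement: float = 0.0   # hypothetical normalized engagement signal, 0..1
    dwell_time: float = 0.0   # hypothetical normalized dwell-time signal, 0..1
    is_spam: bool = False
    is_ad: bool = False


def source_candidates(pool):
    """Candidate sourcing: here, simply every organic (non-ad) post in the pool."""
    return [p for p in pool if not p.is_ad]


def filter_spam(posts):
    """Spam filtering: drop anything already flagged as spam."""
    return [p for p in posts if not p.is_spam]


def base_score(post):
    """Ranking signals: an assumed weighted mix of engagement and dwell time."""
    return 0.6 * post.engagement + 0.4 * post.dwell_time


def rank(posts):
    """Greedy ranking with an author-diversity penalty.

    Each time an author is picked, their remaining posts have their
    score halved (an assumed penalty, chosen only for illustration).
    """
    remaining = list(posts)
    picks_per_author = {}
    ranked = []
    while remaining:
        best = max(
            remaining,
            key=lambda p: base_score(p) * 0.5 ** picks_per_author.get(p.author, 0),
        )
        remaining.remove(best)
        ranked.append(best)
        picks_per_author[best.author] = picks_per_author.get(best.author, 0) + 1
    return ranked


def blend_ads(ranked, ads, every=3):
    """Ad blending: insert one ad after every `every` organic posts."""
    feed = []
    ad_iter = iter(ads)
    for position, post in enumerate(ranked, start=1):
        feed.append(post)
        if position % every == 0:
            ad = next(ad_iter, None)
            if ad is not None:
                feed.append(ad)
    return feed


def build_feed(pool, ads):
    """Run the four stages in order and return the final feed."""
    return blend_ads(rank(filter_spam(source_candidates(pool))), ads)
```

Even in a toy version like this, the diversity penalty changes the outcome: an author's second-best post can fall below a weaker post from someone else.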

Musk framed the releases candidly: “We know the algorithm is dumb and needs massive improvements, but at least you can see us struggle to make it better in real-time.” Supporters praise the move for building trust and inviting community contributions. Critics call it “transparency theater,” noting that training data, full neural network weights, moderation blacklists, and certain visibility filters often remain opaque or redacted.

Still, X stands alone among major platforms in publishing production-level recommendation code.

The Competitive Moat: Why Secrecy Reigns Supreme

1. Intellectual Property & Competitive Advantage: Recommendation algorithms are the core engine of modern social media. They determine what keeps users scrolling, and therefore what generates ad revenue. Platforms like Meta (Facebook + Instagram), ByteDance (TikTok), Google (YouTube), and Reddit view their finely tuned systems as proprietary technology worth billions. Open-sourcing would hand competitors, including copycat startups, the blueprint for free.

Meta has shared high-level research papers and blog posts about its AI ranking systems but never production code. YouTube publishes creator-focused “algorithm tips” while keeping the actual models under wraps. TikTok offers vague explanations in its Transparency Center and has discussed limited oversight in regulatory negotiations, but ByteDance has fiercely protected the underlying code.

2. Security, Gaming, and Abuse Risks: Full transparency creates immediate vulnerabilities. Bad actors could reverse-engineer systems to maximize reach for spam, hate speech, disinformation campaigns, or coordinated inauthentic behavior. Researchers note that even partial releases can be exploited; complete open-sourcing would likely accelerate sophisticated manipulation at scale.

Platforms also worry about “algorithmic gaming” by creators and marketers. Once the exact weighting of signals (likes, comments, watch time, negative feedback) is public, optimization becomes an arms race that could degrade feed quality.
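To see why public weights invite gaming, consider a deliberately simplified scoring function in Python. The signal names follow the ones listed above, but the weights are invented for illustration and do not come from any platform's code.

```python
# Hypothetical per-signal weights; real platforms do not publish these values.
WEIGHTS = {
    "likes": 1.0,
    "comments": 4.0,
    "watch_seconds": 0.5,
    "negative_feedback": -20.0,
}


def score(signals, weights=WEIGHTS):
    """Weighted sum of a post's signals; missing signals count as zero."""
    return sum(weight * signals.get(name, 0.0) for name, weight in weights.items())


def most_rewarded_signal(weights=WEIGHTS):
    """The signal with the largest payoff per unit, which is what a gamer would chase."""
    return max(weights, key=weights.get)
```

Under these assumed numbers, one extra comment is worth four likes, so anyone reading the weights would know to bait replies rather than earn likes. That is the arms race in miniature.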

3. Regulatory and Legal Exposure: Greater openness invites deeper scrutiny. Regulators in the EU (under the Digital Services Act), U.S. lawmakers, and governments worldwide already pressure platforms over bias, addiction, polarization, and child safety. Releasing code could fuel lawsuits, audits, and demands for constant tweaks. Most companies prefer controlled transparency reports and selective researcher access over full public disclosure.

4. Business Model Protection: Engagement is revenue. Algorithms are tuned not just for relevance but for maximum time-on-site and ad views. Revealing the precise machinery could expose uncomfortable truths about how platforms prioritize sensational or divisive content. It might also undermine premium features or erode ad-targeting advantages.

Limited Transparency Efforts Elsewhere

- Meta (Facebook, Instagram, Threads): Shares AI research, offers user tools to reset recommendations, and runs limited researcher APIs. No production algorithm code.

- YouTube: Publishes creator guidelines and some ranking signals but treats the core recommender as a trade secret.

- TikTok: Operates Transparency Centers and has proposed third-party monitoring in U.S. negotiations, yet the algorithm remains closely held.

- Reddit: Relies heavily on community voting and has discussed some ranking factors publicly but keeps machine-learning enhancements proprietary.

No major rival has matched X’s level of code release as of mid-2026.

The Broader Debate: Trust vs. Control

Proponents of open algorithms argue that publishing the code would reduce echo chambers, enable independent audits, and democratize platform governance. Critics counter that true accountability requires more than code: it needs insight into training data, human oversight, and real-world outcomes.

As AI-powered recommendation systems grow more sophisticated (and opaque even to their own engineers), the gap between X’s approach and industry norms highlights a philosophical divide: one platform betting on radical transparency to rebuild trust, the others prioritizing control, safety, and competitive edge.

Whether X’s strategy delivers better products and user confidence — or simply invites more scrutiny — remains an unfolding experiment. For now, it stands as the exception proving how tightly the rest of social media clings to its algorithmic black boxes.

What’s your take? Should regulators force all major platforms to open-source their recommendation algorithms? Drop your thoughts in the comments.

VFuturMedia.com – Tracking the future of social media, AI, and digital transparency.
