By VFuture Media Team | April 21, 2026 | 10 min read

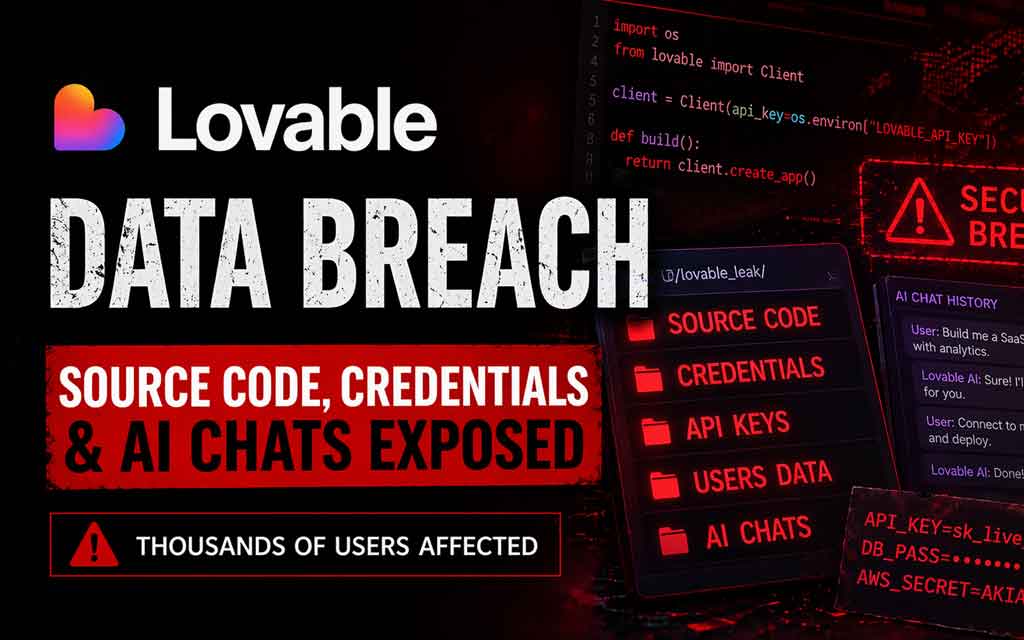

A major security incident has rocked the AI coding world. The popular vibe-coding platform Lovable faced intense scrutiny after a researcher revealed that anyone with a free account could access other users’ AI chat histories, source code, database credentials, and customer data. The flaw, linked to projects created before November 2025, has sparked widespread debate about security in AI-powered app-building tools.

At VFuture Media, we cover the latest AI news, AI models, startup developments, and the real-world risks of rapid AI adoption. This incident highlights the growing security challenges in the “vibe coding” era, where natural language prompts generate full applications — often without traditional code reviews. Here’s a complete breakdown of the Lovable incident, the company’s response, technical details, and critical takeaways for developers and businesses.

What Exactly Happened? The Researcher’s Discovery

On April 20, 2026, security researcher @weezerOSINT (also referred to as “Impulsive”) posted detailed findings on X. After creating a free Lovable account, the researcher claimed they could access sensitive information from other users’ projects with just five simple API calls — no advanced hacking required.

Exposed data reportedly included:

- Complete project source code

- Database credentials (including Supabase keys and service role keys)

- Full AI chat histories — where users discuss app logic, paste error logs, share schemas, and sometimes include sensitive details or PII

- Customer records and other project-related data

The issue primarily affected projects created before November 2025, when Lovable reportedly introduced changes to permissions. The researcher noted that employees from major companies like Nvidia, Microsoft, Uber, and Spotify had accounts on the platform, raising concerns about potential enterprise-level exposure.

The vulnerability was identified as a classic Broken Object Level Authorization (BOLA) flaw, the top-ranked risk (API1) in the OWASP API Security Top 10. In simple terms, Lovable’s API verified that a user was logged in but failed to check whether the requested project actually belonged to that user.
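To make the difference between authentication and authorization concrete, here is a minimal Python sketch of a BOLA-style handler next to a corrected one. It is not Lovable's code: the `Project` record, the in-memory `PROJECTS` store, and both function names are hypothetical, chosen only to illustrate the pattern the researcher described.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Project:
    project_id: str
    owner_id: str
    source: str


class Forbidden(Exception):
    """Raised when a caller may not see the requested resource."""


# Hypothetical in-memory store standing in for a real database.
PROJECTS = {
    "p1": Project("p1", owner_id="alice", source="print('alice app')"),
    "p2": Project("p2", owner_id="bob", source="print('bob app')"),
}


def get_project_vulnerable(user_id: Optional[str], project_id: str) -> Project:
    # Authentication only: any logged-in user passes this check,
    # so knowing (or guessing) a project ID is enough to read it.
    if user_id is None:
        raise Forbidden("login required")
    return PROJECTS[project_id]


def get_project_checked(user_id: Optional[str], project_id: str) -> Project:
    if user_id is None:
        raise Forbidden("login required")
    project = PROJECTS.get(project_id)
    # Authorization: the object must belong to the caller.
    # Missing and foreign projects raise the same error so that
    # project IDs cannot be probed through differing responses.
    if project is None or project.owner_id != user_id:
        raise Forbidden("not found or not yours")
    return project
```

In this sketch, `get_project_vulnerable("bob", "p1")` returns Alice's project, while `get_project_checked("bob", "p1")` raises `Forbidden`. A real platform would also need to account for collaborators and public projects, which this example deliberately leaves out.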

Lovable’s Initial Response and Backlash

Lovable, a Stockholm-based startup valued at over $6 billion, quickly responded on X. The company initially denied suffering a data breach, stating:

“To be clear: We did not suffer a data breach. Our documentation of what ‘public’ implies was unclear, and that’s a failure on us.”

The company attributed the visibility to public project settings and claimed the behavior was partly intentional for projects marked as public. However, critics pointed out that many users may not have fully understood the implications of “public” visibility, especially regarding embedded credentials and private chat histories.

In later updates, Lovable acknowledged that although it had stopped making chats public by default in December 2025, a backend permission unification in February 2026 accidentally re-enabled access to chats on public projects. This admission shifted the narrative from “intentional design” to an operational oversight.
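One way to guard against this kind of regression is to decide visibility per resource type rather than per project, and to pin that decision down with a test. The Python sketch below is purely illustrative and assumes nothing about Lovable's internals: the `Resource` enum, the `PUBLIC_RESOURCES` allowlist, and `can_view` are hypothetical names, and which resources belong in the allowlist is a product decision.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional, Set


class Resource(Enum):
    PREVIEW = "preview"
    SOURCE = "source"
    CHAT = "chat"


# Hypothetical policy: the only resources a non-member may see on a
# public project. Anything not listed here stays private by default.
PUBLIC_RESOURCES = frozenset({Resource.PREVIEW})


@dataclass
class Project:
    owner_id: str
    is_public: bool
    member_ids: Set[str] = field(default_factory=set)


def can_view(project: Project, viewer_id: Optional[str], resource: Resource) -> bool:
    # Owners and members can see every resource of their own project.
    if viewer_id is not None and (
        viewer_id == project.owner_id or viewer_id in project.member_ids
    ):
        return True
    # Everyone else needs BOTH a public project and an allowlisted resource.
    return project.is_public and resource in PUBLIC_RESOURCES


def test_chats_stay_private_on_public_projects() -> None:
    # Regression test: a permissions refactor must not flip this result.
    public_project = Project(owner_id="alice", is_public=True)
    assert not can_view(public_project, "bob", Resource.CHAT)
```

Because the allowlist is explicit, a later refactor that merges permission checks has to change `PUBLIC_RESOURCES` on purpose to expose chats, and the regression test fails if it does.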

The company faced significant backlash for the initial defensive tone. Many in the developer community argued that downplaying the issue as a documentation problem ignored the real risks of exposed secrets and sensitive conversations with AI models.

Technical Deep Dive: How the Flaw Worked

Lovable’s platform allows users to describe apps in natural language (“vibe coding”), with AI generating the full-stack code, database schemas, and deployment. Projects can be set to public or private.

According to the researcher:

- The API endpoint for fetching project data performed authentication checks but skipped proper ownership authorization.

- Once a project ID was known (or guessed/enumerated), any authenticated user could retrieve its full contents.

- Source code often contained hardcoded or exposed credentials (e.g., Supabase service_role keys that bypass Row Level Security).

- AI chat histories — valuable for understanding app intent — sometimes included pasted secrets, business logic, or user data.
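For teams that want to check their own code for the credential problem described above, the following Python sketch flags JWT-shaped strings whose payload carries a `service_role` role claim, which is how Supabase's JWT-based API keys distinguish the privileged key from the public `anon` key. It is a rough heuristic under stated assumptions, not a complete secret scanner: the function names are hypothetical, the token is decoded without verifying its signature, and keys in other formats will not be detected.

```python
import base64
import json
import re
from typing import List, Optional

# Three dot-separated base64url segments, the first starting with "eyJ"
# (the base64 encoding of '{"'), is the usual shape of a JWT.
JWT_PATTERN = re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+")


def jwt_role(token: str) -> Optional[str]:
    """Return the unverified 'role' claim of a JWT-shaped string, or None."""
    try:
        payload_b64 = token.split(".")[1]
        # base64url payloads usually omit padding; restore it before decoding.
        padded = payload_b64 + "=" * (-len(payload_b64) % 4)
        payload = json.loads(base64.urlsafe_b64decode(padded))
    except (ValueError, IndexError):
        return None
    if not isinstance(payload, dict):
        return None
    role = payload.get("role")
    return role if isinstance(role, str) else None


def find_service_role_keys(source: str) -> List[str]:
    """List JWT-shaped strings in `source` whose role claim is service_role."""
    return [t for t in JWT_PATTERN.findall(source) if jwt_role(t) == "service_role"]


def make_demo_token(role: str) -> str:
    """Build an unsigned, JWT-shaped demo string (not a real key)."""
    def enc(obj: dict) -> str:
        raw = json.dumps(obj).encode()
        return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()

    header = enc({"alg": "HS256", "typ": "JWT"})
    payload = enc({"role": role})
    return ".".join([header, payload, "demo-signature"])


if __name__ == "__main__":
    sample = 'const key = "' + make_demo_token("service_role") + '";'
    print(len(find_service_role_keys(sample)), "privileged key(s) found")
```

Any match in client-side or shared code is worth treating as compromised, since a `service_role` key bypasses Row Level Security.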

This isn’t the first security concern for Lovable. Earlier in 2026, researchers found critical vulnerabilities in Lovable-hosted apps, including one EdTech application that exposed over 18,000 user records due to AI-generated code lacking basic authorization checks.

The broader trend is similar. Reported analyses of AI-generated (“vibe-coded”) apps suggest higher rates of critical vulnerabilities than in traditionally written code, with missing input validation, improper authorization, and exposed secrets among the most common issues.

Implications for Users and the AI Coding Ecosystem

If you’ve built projects on Lovable:

- Immediate actions recommended: Review all projects for exposed credentials. Rotate any database keys, API tokens, or secrets that may have been in source code or chats. Audit deployed applications for similar authorization gaps.

- Risk of exploitation: Exposed database credentials could allow attackers to access or modify production data. AI chat histories might reveal intellectual property or sensitive business discussions.

- Reputation and compliance: For teams handling user data, this could trigger GDPR or other regulatory concerns, especially if PII appeared in chats.

The incident underscores risks in the fast-growing vibe-coding space. Platforms like Lovable, Bolt.new, and others promise speed and accessibility, but rapid generation without rigorous security review can lead to production vulnerabilities. Broader scans of AI-built apps have revealed thousands of high-impact issues, including exposed secrets and weak authorization.

Lovable’s Context in the AI Startup Landscape

Lovable has gained massive traction as one of Europe’s hottest AI startups, enabling non-technical users and developers to build functional web apps quickly using advanced AI models (likely leveraging models like Claude or GPT variants for code generation).

Its high valuation reflects investor excitement around agentic AI and natural language development tools. However, this event highlights a key tension: the same AI capabilities that accelerate building can amplify security risks if not paired with strong platform-level safeguards, default secure configurations, and clear user education.

Lessons for AI Builders, Developers, and Enterprises in 2026

- Never trust “public” by default — Always assume sensitive data (code, chats, credentials) could be accessible unless explicitly private with proper enforcement.

- Review AI-generated code rigorously — AI excels at patterns but often misses security edge cases like proper authorization or secret handling.

- Implement and test authorization at every layer — BOLA-style flaws are preventable with strict ownership checks.

- Secure your AI conversations — Treat chats with AI coding assistants as potentially sensitive; avoid pasting real credentials or PII.

- Rotate secrets proactively — If a platform exposes data, assume compromise and rotate everything.

- Demand better defaults from AI tools — Platforms should enforce private-by-default for code and chats, with clear warnings about visibility.
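As one practical take on the advice about AI conversations, error logs and config snippets can be scrubbed before they are pasted into a chat. The Python sketch below is a minimal illustration with a deliberately small, hypothetical pattern set (JWT-shaped tokens, passwords in Postgres connection URLs, and email addresses). Real secret and PII formats vary widely, so it should be read as a starting point rather than a guarantee.

```python
import re

# Hypothetical, deliberately small pattern set. Order matters: the
# connection-URL rule runs before the email rule so that "password@host"
# is not mistaken for an email address.
REDACTIONS = [
    # JWT-shaped tokens (three base64url segments starting with "eyJ").
    (
        re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+"),
        "[REDACTED_JWT]",
    ),
    # Password part of postgres:// or postgresql:// connection URLs.
    (
        re.compile(r"(postgres(?:ql)?://[^:\s/]+:)[^@\s]+(@)"),
        r"\1[REDACTED_PASSWORD]\2",
    ),
    # Email addresses, as a simple stand-in for PII.
    (
        re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
        "[REDACTED_EMAIL]",
    ),
]


def redact(text: str) -> str:
    """Apply every redaction rule to `text`, in order, and return the result."""
    for pattern, replacement in REDACTIONS:
        text = pattern.sub(replacement, text)
    return text


if __name__ == "__main__":
    log_line = (
        "connect postgresql://app:s3cret@db.example.com:5432/app "
        "failed for jane@example.com"
    )
    print(redact(log_line))
```

Redaction reduces what a leaked chat history can reveal, but it does not replace rotating any secret that has already been pasted somewhere it should not be.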

This incident adds to growing discussions around AI security and responsible deployment of frontier AI models in developer tools. As tools become more powerful, platform providers must prioritize security engineering alongside generative capabilities.

The Road Ahead: Strengthening Security in Vibe Coding

Lovable has indicated it is addressing the documentation and permission issues. The broader industry should use this as a wake-up call to invest in automated security scanning for AI-generated code, better permission models, and transparency around data handling.

For users, the message is clear: speed is valuable, but not at the expense of basic security hygiene. Combining AI models for rapid development with human oversight and security best practices remains essential in 2026.

What’s your experience? Have you built projects on Lovable or similar vibe-coding platforms? How do you handle security when using AI code generation tools? Share your thoughts and any mitigation steps in the comments below.

Subscribe to the VFuture Media newsletter for weekly roundups of AI developments, security alerts, and technology insights.

Sources: Official statements from Lovable, researcher disclosures on X, reports from The Register, Cybernews, Sifted, and industry analyses as of April 21, 2026.
