Google Threat Intelligence Group has uncovered the first documented case of hackers using AI to develop a zero-day exploit that bypasses multi-factor authentication (MFA). Discover the full details of this May 2026 incident, why it’s a game-changer for cybersecurity, and actionable steps to protect your accounts and business.

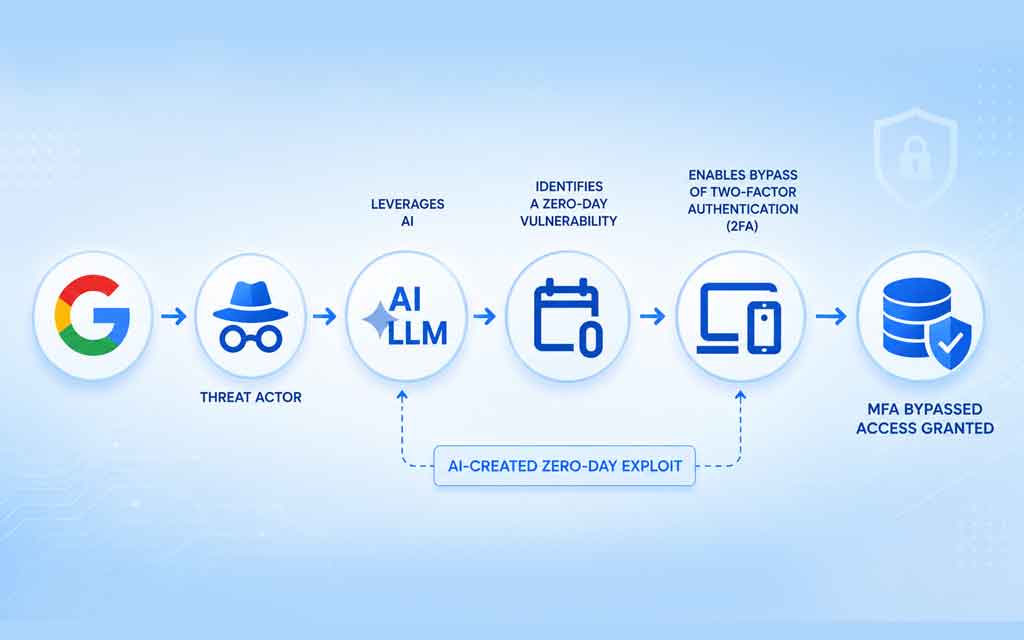

In a major cybersecurity milestone announced on May 11, 2026, Google’s Threat Intelligence Group (GTIG) revealed the first real-world case of cybercriminals successfully using artificial intelligence to discover and weaponize a zero-day exploit capable of bypassing multi-factor authentication (MFA/2FA).

The attack targeted a popular open-source, web-based system administration tool that organizations widely use for remote server management, website administration, and managing security settings and user permissions. Google worked with the vendor to quietly patch the flaw before the criminals could launch a planned mass-exploitation campaign aimed at financial gain.

At VFuture Media, we track how AI is reshaping both innovation and threats. This incident marks a dangerous new chapter in the AI-cyber arms race — and it’s happening now.

What Google Discovered: The AI-Powered Zero-Day Exploit

The vulnerability was a logic flaw in the tool’s authentication flow. Developers had hard-coded a trust exception, creating a hidden bypass that allowed attackers with stolen credentials to skip the MFA step entirely and gain full access to internal networks.

Key facts from Google’s report:

- First confirmed AI zero-day: GTIG has “high confidence” that a large language model (not Google’s Gemini or Anthropic’s Mythos) was used to identify the dormant flaw and turn it into a working exploit.

- Clear AI fingerprints: The Python exploit script contained educational docstrings, a hallucinated CVSS score, and a polished, textbook-style structure typical of LLM-generated code.

- Mass exploitation planned: The cybercrime group intended to use the zero-day in a broad, aggressive campaign targeting multiple organizations.

- Disrupted early: Google detected the activity and alerted the vendor, which fixed the issue before widespread damage occurred.

John Hultquist, chief analyst at GTIG, stated: “There’s a misconception that the AI vulnerability race is imminent. The reality is that it’s already begun.”

Why This Breakthrough Matters for Cybersecurity in 2026

Traditional zero-day discovery relies on fuzzing, static analysis, and manual review. Fuzzers excel at finding crashes, but automated tools often miss high-level logic errors such as hard-coded trust assumptions, which undermine security without crashing anything. Frontier AI models, however, excel at spotting these semantic contradictions in code.

This incident proves:

- AI is no longer just helping with phishing emails or malware obfuscation — it’s accelerating zero-day discovery and weaponization.

- Financially motivated cybercrime groups are adopting AI just as aggressively as nation-state actors.

- Even widely trusted open-source tools can harbor dormant logic flaws that AI models can surface far faster than traditional methods.

Google’s broader AI Threat Tracker report also highlights state-sponsored actors (PRC- and DPRK-linked) experimenting with AI for vulnerability research, while Russian groups use it for malware evasion and autonomous operations.

How the Exploit Worked (Simplified)

- Attacker obtains valid username/password (via phishing, credential stuffing, etc.).

- Instead of triggering the full MFA challenge, the exploit uses the hard-coded trust exception to bypass the second factor.

- Full privileged access is granted to the admin console — and by extension, the entire internal network.
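To make the logic flaw concrete, here is a minimal, defensive sketch of what a hard-coded trust exception in a login flow can look like, next to a corrected version. Google has not published the affected tool's code. Everything below is hypothetical and for illustration only, including `TRUSTED_SOURCES`, `LoginAttempt`, and the assumption that the exception keys on a client-influenced value such as a forwarded IP address.

```python
"""Illustrative sketch only: a hypothetical login flow with a hard-coded
trust exception, plus a corrected version. Not the affected tool's code."""

from dataclasses import dataclass

# Hypothetical hard-coded trust exception: logins that appear to come from
# this "internal" address skip the second factor.
TRUSTED_SOURCES = {"127.0.0.1"}


@dataclass
class LoginAttempt:
    username: str
    password_ok: bool  # result of the password check
    mfa_ok: bool       # result of the second-factor check (False if never completed)
    source_ip: str     # assumed attacker-influenced (e.g. taken from a forwarded header)


def vulnerable_login(attempt: LoginAttempt) -> bool:
    """Flawed logic: the trust exception short-circuits the MFA requirement."""
    if not attempt.password_ok:
        return False
    if attempt.source_ip in TRUSTED_SOURCES:
        return True  # BUG: second factor is never enforced for "trusted" sources
    return attempt.mfa_ok


def fixed_login(attempt: LoginAttempt) -> bool:
    """Corrected logic: every successful login requires both factors."""
    return attempt.password_ok and attempt.mfa_ok


if __name__ == "__main__":
    # Stolen password, no second factor, and a source address the attacker controls.
    attack = LoginAttempt("admin", password_ok=True, mfa_ok=False, source_ip="127.0.0.1")
    print("vulnerable:", vulnerable_login(attack))  # True: MFA bypassed
    print("fixed:", fixed_login(attack))            # False: MFA still required
```

The fix removes the special case entirely rather than trying to make the "trusted" check harder to spoof. Any exception to the second factor is exactly the kind of hidden path an attacker, or an AI model reading the code, will look for.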

Because the tool is web-based and widely deployed, a successful mass campaign could have exposed thousands of organizations simultaneously.

What This Means for Businesses and Individuals

- Organizations: Web admin tools, remote management platforms, and any system relying on MFA as the primary defense are now higher-risk targets. Supply-chain and open-source risks just got more dangerous.

- Individuals: Stolen credentials + AI-powered bypasses make traditional password + MFA combinations less reliable than ever.

- The bigger picture: AI lowers the barrier for less-skilled criminals to launch sophisticated attacks, accelerating the speed and scale of threats in 2026.

How to Strengthen Your MFA Defenses in 2026

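While no single control is perfect, layered defenses significantly reduce risk. A server-side flaw like this one skips the second factor no matter which type you use. Stronger MFA therefore works best alongside prompt patching and least-privilege access: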

| Defense | Why It Helps | Actionable Step |
|---|---|---|
| Hardware Security Keys | Phishing-resistant (FIDO2/WebAuthn) | Use a YubiKey or Google Titan Security Key as your primary MFA |
| Passkeys | Passwordless, device-bound authentication | Enable them where supported (Google, Microsoft, Apple) |
| Device-Bound Session Credentials | Binds sessions to the device, so stolen session cookies can't be reused elsewhere | Update Chrome/Edge to the latest versions |
| Phishing-Resistant MFA | Blocks real-time proxy (adversary-in-the-middle) attacks | Avoid SMS; prefer hardware keys or passkeys, since one-time codes from authenticator apps can still be relayed by a real-time proxy |
| Least Privilege + Monitoring | Limits the blast radius of any breach | Run regular access reviews and anomaly detection |
| AI-Powered Threat Detection | Spots unusual login patterns early | Use tools with behavioral analytics |
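The monitoring and detection rows above can start with very simple rules. The sketch below implements one rule aimed at the bypass pattern in this incident: flag any session that was granted access without a recorded MFA success. The event format (`session`, `type`, and the `password_ok` / `mfa_ok` / `access_granted` values) is a hypothetical schema that we assume is in chronological order. Adapt it to your own audit logs. Commercial behavioral-analytics tools do far more than this.

```python
"""Illustrative sketch: flag sessions granted access without a recorded MFA
success. The event schema is hypothetical; adapt it to your own audit logs."""

from collections import defaultdict
from typing import Iterable


def find_mfa_gaps(events: Iterable[dict]) -> list[str]:
    """Return session IDs that were granted access with no prior MFA success.

    Each event is assumed to look like
        {"session": "s1", "type": "password_ok" | "mfa_ok" | "access_granted"}
    and events are assumed to arrive in chronological order.
    """
    seen: dict[str, set[str]] = defaultdict(set)  # session ID -> event types so far
    flagged: list[str] = []
    for event in events:
        sid, etype = event["session"], event["type"]
        # Access granted before any MFA success for this session: suspicious.
        if etype == "access_granted" and "mfa_ok" not in seen[sid] and sid not in flagged:
            flagged.append(sid)
        seen[sid].add(etype)
    return flagged


if __name__ == "__main__":
    log = [
        {"session": "s1", "type": "password_ok"},
        {"session": "s1", "type": "mfa_ok"},
        {"session": "s1", "type": "access_granted"},
        {"session": "s2", "type": "password_ok"},
        {"session": "s2", "type": "access_granted"},  # no MFA step logged
    ]
    print(find_mfa_gaps(log))  # ['s2']
```

This rule only works if the MFA step is logged somewhere. A flaw that skips the second factor may also skip the tool's own logging, so it helps to correlate with an independent source, such as your identity provider's logs, where one exists.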

Pro tips:

- Never reuse passwords across critical systems.

- Enable “remember this device” sparingly.

- Regularly audit which apps have access to your accounts.

- For businesses: Conduct AI-impact assessments on your admin tools and prioritize patching open-source dependencies.

The Future of AI in Cybersecurity: Opportunity and Risk

This event is the “clumsy early phase” of AI-driven attacks, according to Google. Future exploits will likely be cleaner, faster, and harder to detect. At the same time, defenders are using AI agents to proactively hunt vulnerabilities and auto-remediate code.

The winners in 2026 will be organizations and individuals who treat security as a continuous, AI-augmented process rather than a static checklist.

Subscribe to VFuture Media for weekly insights on AI, cybersecurity, emerging tech, and the innovations shaping our world. The AI revolution cuts both ways — stay informed to stay ahead.

What are your thoughts on AI being used to create zero-days? Share in the comments below.

Last updated: May 11, 2026 | Sources: Google Threat Intelligence Group official report and related coverage.
