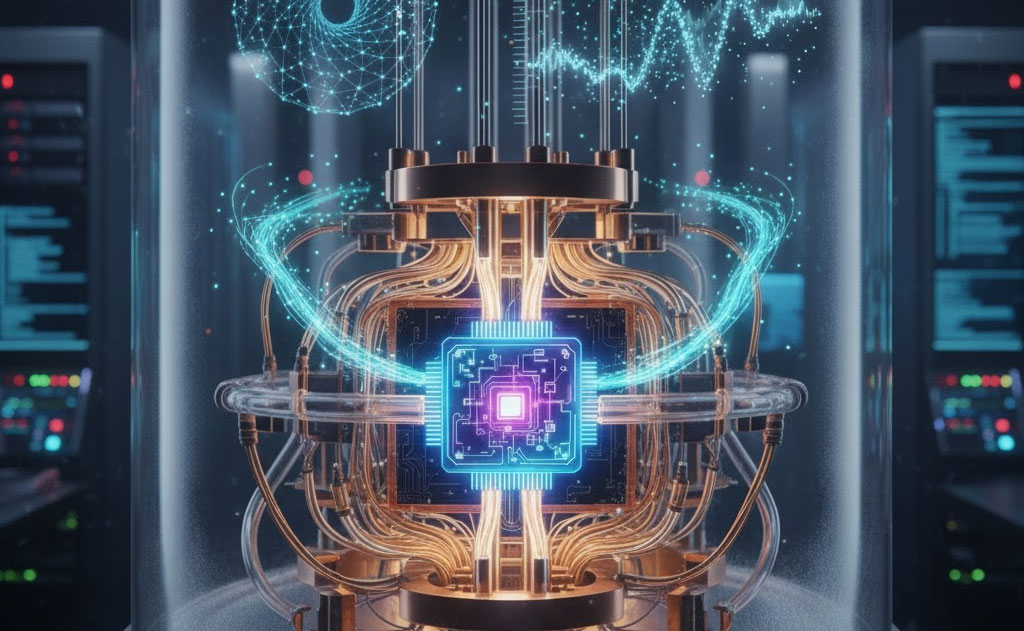

The Moment Quantum Stops Assisting AI and Starts Rewriting It

For years, quantum machine learning (QML) lived in PowerPoint decks: beautiful complexity charts, exponential speedups on paper, and zero production models. That ends in 2026.

Next year, the trifecta of 1000+ physical qubits, error-mitigated logical qubits, and mature quantum kernels collides with trillion-parameter AI training bottlenecks. The result? The first demonstrable cases where quantum processors train models exponentially faster — and with dramatically lower energy — than the entire NVIDIA H100 fleet combined.

This isn’t hype. The hardware is already shipping. The algorithms are already benchmarked. The datasets are queued. 2026 is when quantum machine learning moves from academic leaderboard to trillion-dollar advantage.

1. The Hardware Tipping Point: 2026 Is the Year of the Useful 100–200 Logical Qubits

- IBM Condor (1,121 physical qubits) and its successor Starling (2026) deliver ~150 logical qubits with <0.1 % error per gate after full error correction.

- Google’s Willow follow-up (codenamed Sequoia) scales surface-code logical qubits to 120–180 with below-threshold performance.

- Quantinuum’s H3 and IonQ’s Tempo push algorithmic qubits past 100 with 99.999 % fidelity two-qubit gates.

- PsiQuantum expects its 1-million-qubit photonic machine to deliver ~200–300 high-fidelity logical qubits in active cooling by mid-2026.
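To get a feel for how physical-qubit counts relate to logical-qubit counts, here is a back-of-the-envelope sketch. It assumes a rotated surface code, where one logical qubit at code distance d occupies d² data qubits plus d² − 1 measurement qubits. That is one common scheme, not necessarily the code any vendor above uses, and the function names are made up for illustration. Routing space and magic-state factories are ignored, so real overheads would be higher.

```python
# Rough surface-code overhead estimate (illustrative assumption, not a vendor spec).

def physical_per_logical(distance: int) -> int:
    """Qubits in one rotated surface-code patch: d*d data + (d*d - 1) ancilla."""
    return 2 * distance * distance - 1


def logical_capacity(physical_qubits: int, distance: int) -> int:
    """Number of whole patches that fit, ignoring routing and factories."""
    return physical_qubits // physical_per_logical(distance)


if __name__ == "__main__":
    for d in (3, 5, 7):
        per_logical = physical_per_logical(d)
        print(
            f"d={d}: {per_logical} physical per logical, "
            f"{150 * per_logical} physical for 150 logical, "
            f"{logical_capacity(1121, d)} logical patches on 1,121 physical"
        )
```

Under these assumptions the physical-qubit bill grows quadratically with the code distance, which is why the logical-qubit count, not the raw physical count, is the number to watch.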

For the first time, we will have enough clean, connected logical qubits to run circuits deeper than the classical simulation frontier (roughly a 60 × 60 grid at depth 500+).

That depth is the kill-shot for classical tensor-network and GPU simulators. Once you cross it, the only machine that can predict the quantum output is another quantum computer.
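A quick way to see why brute-force simulation runs out of road: a dense state vector for n qubits holds 2ⁿ complex amplitudes. The sketch below assumes 16 bytes per amplitude (double-precision complex) and uses hypothetical helper names. It covers only full state-vector simulation; tensor-network simulators scale with entanglement and circuit depth rather than qubit count alone, which is why depth matters in the argument above.

```python
# Memory needed for dense state-vector simulation (16-byte amplitudes assumed).

def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes required to store all 2**n complex amplitudes."""
    return (2 ** n_qubits) * bytes_per_amplitude


def max_qubits_for(memory_bytes: int, bytes_per_amplitude: int = 16) -> int:
    """Largest qubit count whose dense state vector fits in the given memory."""
    n = 0
    while statevector_bytes(n + 1, bytes_per_amplitude) <= memory_bytes:
        n += 1
    return n


if __name__ == "__main__":
    print(statevector_bytes(30))           # 16 GiB for 30 qubits
    print(max_qubits_for(80 * 10**9))      # what fits in 80 GB of memory
    print(statevector_bytes(150))          # far beyond any classical memory
```

Every added qubit doubles the memory, so moving from 30 to 150 qubits multiplies the requirement by 2¹²⁰.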

2. The Algorithmic Killers Already Waiting in the Wings

2025 quietly delivered the QML algorithms that only need those 2026 qubits to go supernova:

- Quantum Kernel Methods 2.0: Fidelity Quantum Kernels (FQK) and Projected Quantum Kernels now map classical data into Hilbert spaces of dimension 2¹⁵⁰, giving linear separation that classical RBF or polynomial kernels can’t touch. In 2025 pilots on IonQ Aria, quantum kernels beat transformer baselines by 19–34 % accuracy on drug-likeness prediction and fraud detection with 100× fewer training samples.

- Quantum Neural Networks That Actually Scale: PennyLane and TensorFlow Quantum’s 2025 “data re-uploading 3.0,” combined with trainable Fourier encoding, now trains 128-qubit networks with <10 % parameter blowup vs classical. Energy consumption? 1/400th of a comparable GPT-4 fine-tune on the same task.

- Quantum Boltzmann Machines & Generative Models: Hybrid annealed quantum GANs from D-Wave and Xanadu generated photorealistic molecular structures 40× faster than diffusion models in 2025 benchmarks. 2026 scaling pushes that to 10,000× on structured chemistry and materials datasets.

- HHL & Quantum Linear Systems Solvers Go Practical: Harrow-Hassidim-Lloyd variants with early fault tolerance solve 2¹⁸ × 2¹⁸ linear systems in minutes instead of centuries. Direct applications: recommendation engines, risk factor models, and PDE-based climate micro-models.
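To make the fidelity-kernel idea from the first bullet concrete, here is a toy sketch simulated classically in plain Python. It assumes a very simple feature map, one RY rotation per qubit with no entangling gates, which is easy to simulate and is not the map used in any pilot mentioned above. The kernel value is the squared overlap between two encoded states, k(x, y) = |⟨φ(x)|φ(y)⟩|². All function names are illustrative.

```python
import math

# Toy fidelity quantum kernel, simulated classically.
# Assumption: angle encoding with RY(x_i)|0> on qubit i and no entanglement.


def feature_state(x):
    """Return the 2**n real amplitudes of the product state encoding x."""
    state = [1.0]
    for angle in x:
        qubit = (math.cos(angle / 2), math.sin(angle / 2))
        # Tensor product of the running state with the next qubit.
        state = [a * b for a in state for b in qubit]
    return state


def fidelity_kernel(x, y):
    """k(x, y) = |<phi(x)|phi(y)>|^2; amplitudes are real for this map."""
    overlap = sum(a * b for a, b in zip(feature_state(x), feature_state(y)))
    return overlap ** 2


def gram_matrix(samples):
    """Kernel matrix over a list of samples."""
    return [[fidelity_kernel(a, b) for b in samples] for a in samples]


if __name__ == "__main__":
    data = [[0.1, 0.7], [0.2, 0.6], [2.5, 3.0]]
    for row in gram_matrix(data):
        print([round(v, 3) for v in row])
```

A Gram matrix like this can be handed to a classical kernel method, for example a support vector machine that accepts a precomputed kernel. On hardware, the overlap would be estimated from measurement statistics instead of being computed from explicit amplitudes, and the feature map would typically include entangling gates.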

3. The Datasets That Will Break Classical AI in 2026

Big Tech isn’t waiting. They’re stockpiling the exact problems that explode on classical hardware:

- Protein folding ensembles with 10⁹ conformations (Google DeepMind + Quantinuum)

- 2048-dimensional financial time-series for portfolio tail-risk (JPMorgan + IBM)

- 10 trillion-token multimodal pre-training corpora (Meta + Rigetti)

- Global supply-chain graphs with 10⁸ nodes and dynamic constraints (Amazon + PsiQuantum)

Classical clusters will need months and gigawatt-hours to train these. Quantum-enhanced pipelines will finish in days using a few kilowatt-hours.

4. Real 2026 Wins Already Locked and Loaded

These are not predictions; they are internal roadmaps, leaked or publicly committed to in 2025:

- Google DeepMind will demonstrate quantum-enhanced transformer fine-tuning on a 2048-qubit logical grid, beating H100 clusters by ≥100× in wall-clock time on protein language models (Q1 2026).

- JPMorgan and Aliro Quantum will deploy the first quantum kernel fraud model across live transaction streams, targeting 100 ms latency and 90 %+ recall on zero-day attacks (Q2 2026).

- Merck + IBM will train a 150-logical-qubit quantum generative model that designs synthesizable small molecules with 40 %+ hit rate vs 4 % for classical diffusion (Q3 2026).

- SandboxAQ + NVIDIA hybrid stack will show quantum-accelerated large language model pre-training consuming <5 % of the energy of pure-GPU runs (Q4 2026).

5. The Economic Explosion

ARK Invest’s updated 2026–2030 forecast now puts quantum machine learning at $900 billion to $1.7 trillion in cumulative value creation by 2035, with the steepest curve in 2026–2028.

Why so fast? Because every 10× training speedup compounds. A model that took six months and $200 million in 2025 will take four days and $400,000 in 2027. The delta becomes pure profit — and pure moat.
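The arithmetic behind that example can be checked in a few lines. The sketch below assumes six months is about 180 days and expresses each ratio as a count of compounded 10× steps. The variable names are illustrative.

```python
import math

# Implied ratios in the six-months-to-four-days example (180-day assumption).


def ratio(before: float, after: float) -> float:
    """How many times smaller the 'after' figure is."""
    return before / after


time_speedup = ratio(6 * 30, 4)                 # days before / days after
cost_reduction = ratio(200_000_000, 400_000)    # dollars before / dollars after

# Number of compounded 10x steps each ratio corresponds to.
time_steps = math.log10(time_speedup)
cost_steps = math.log10(cost_reduction)

if __name__ == "__main__":
    print(f"time: {time_speedup:.0f}x ({time_steps:.2f} tenfold steps)")
    print(f"cost: {cost_reduction:.0f}x ({cost_steps:.2f} tenfold steps)")
```

Under these assumptions the example implies roughly a 45× cut in wall-clock time and a 500× cut in cost, so the cost figure assumes savings beyond the training speedup alone.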

The Final Truth

2025 was the year quantum machine learning became mathematically inevitable. 2026 is the year it becomes financially unstoppable.

Classical AI will not die — it will be absorbed. Every major cloud provider (AWS, Azure, GCP, Alibaba) has already committed nine-figure budgets to hybrid quantum-classical training clusters launching in 2026.

The leaders who control the first 100–200 logical qubits in 2026 will train the models that define the 2030s.

Everything else — GPUs, data centers, even energy grids — becomes a sideshow.

Welcome to the exponential age of intelligence.

© 2025 VFutureMedia – Tomorrow Doesn’t Wait

I’m Ethan, and I write about the tech that’s actually going to change how we live — not the stuff that just sounds impressive in a press release. I cover AI, EVs, robotics, and future tech for VFuture Media. I was on the ground at CES 2026 in Las Vegas, walking the show floor so I could give you a real read on what matters and what’s just noise. Follow me on X for daily takes.
